The problem

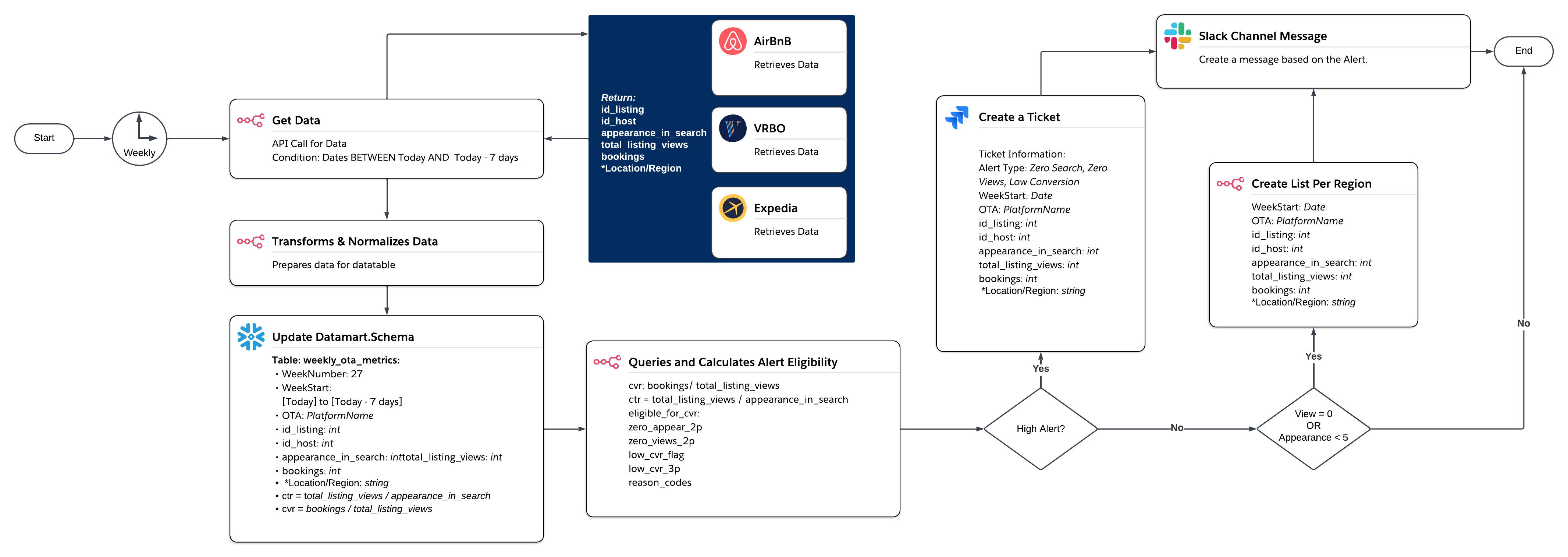

Weekly OTA performance signals are reviewed late, in isolation, and without consistent thresholds.

- Visibility failures (0 appearances / 0 views) are often detected only after revenue has already been lost.

- Conversion leakage is fixable, but hard to spot without eligibility and streak logic.

- No integrated framework → blind spots, inconsistent severity, and alert fatigue.